Three Companies. Three Onboarding Problems. One Pattern.

I have held meaningful product design responsibilities at four companies. Three of them handed me an onboarding problem as one of my first significant assignments. At the time, I treated each one as a unique challenge. Looking back, they were all the same problem wearing different clothes.

The pattern: an organization builds a product, designs the onboarding experience for the version of the user who already understands the product, and then watches completion rates collapse when real users show up knowing nothing.

Here is how it played out three times across my career, what was different each time, and what stayed exactly the same.

- Netflix (2011–2012): International expansion onboarding across 7 markets, millions of users, DVD-to-streaming transition

- Appily.com, formerly Cappex (2015–2018): College application onboarding, 250K to 1.5M users, 47% completion vs. the 20–35% industry range

- TeleSign (2018–2023): Enterprise B2B onboarding, 67-day process reduced to 35 days, 85% self-service rate

Netflix: Testing Your Way to the Right First Step

At Netflix, the problem was not that the onboarding was bad. The problem was that it was built for a domestic user who had grown up with the product. International expansion meant onboarding users who had no existing Netflix context, in markets where streaming infrastructure was unreliable, with content libraries that did not yet match local expectations.

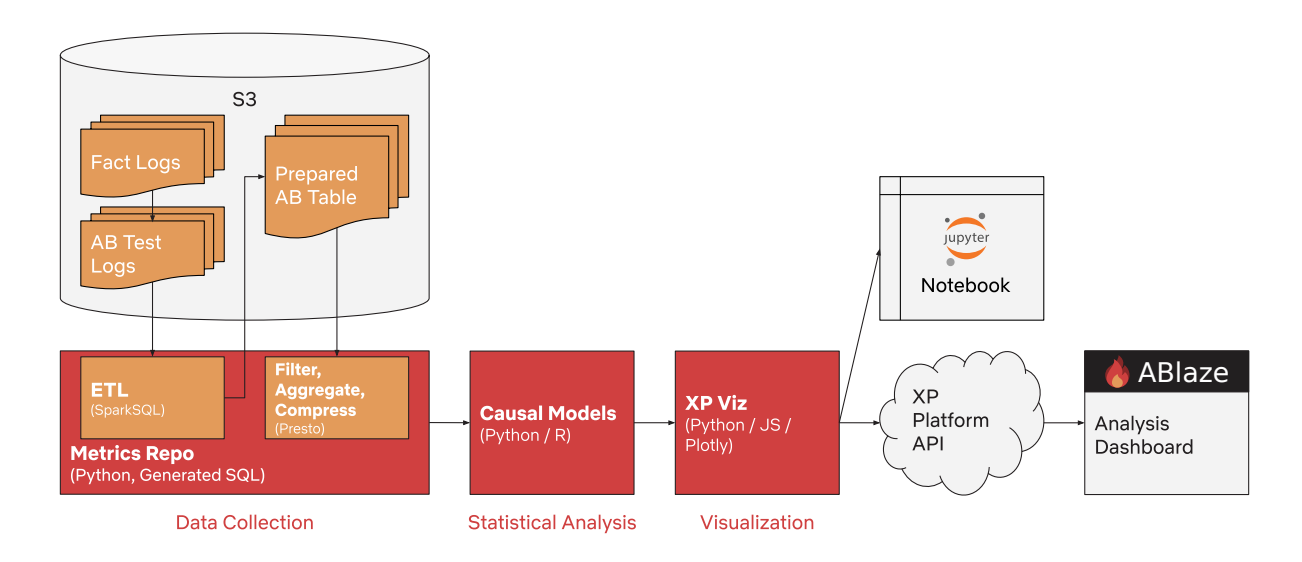

My role was in the experimentation platform, Netflix XP, during the expansion into 7 international markets. The foundational work was not redesigning the onboarding flow. It was building the infrastructure to test which version of the onboarding flow worked for which user in which market.

The lesson I took from Netflix: the right onboarding flow is not a design question. It is a measurement question. You form a hypothesis about what a new user needs to see and understand in the first session, you test it against a meaningful sample, and you let the data tell you whether you were right. Anything else is guessing at scale.
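To make "let the data tell you" concrete, here is a minimal sketch of one common way to compare two onboarding variants: a two-proportion z-test on first-session completion. This is an illustration, not the Netflix XP methodology, and the function name and all sample counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare completion rates of two onboarding variants.

    conv_* = users who completed onboarding, n_* = users exposed.
    Returns (z statistic, two-sided p-value) under a pooled null hypothesis.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate: the shared completion rate if the variants were identical.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical numbers: control completes at 30%, the new first step at 32%.
z, p = two_proportion_z_test(conv_a=3000, n_a=10000, conv_b=3200, n_b=10000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With samples this size a two-point lift is distinguishable from noise. With a few hundred users per variant it usually would not be, which is why the sample has to be meaningful before the data can tell you anything.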

The testing methodology I helped establish at Netflix is still in use today. That is not something I say lightly. Multivariate testing across millions of users creates a feedback loop that no amount of user research can replicate. It is the only way to know.

Appily.com: The Form That Was Eating Students Alive

At Cappex (now Appily.com), the onboarding problem had a specific shape. Students were starting the Universal College Application and abandoning it. The industry-standard completion rate for college applications ranged from 20% to 35%. We were inside that range and needed to get out of it.

The research was unambiguous. I observed high school students attempting to complete the application in user testing sessions. What I watched was not confusion about form fields. It was cognitive overload. Students were tracking deadlines for eight colleges simultaneously, writing essays that needed to work universally, managing document uploads across multiple institutions, and doing all of this while carrying the psychological weight of what these applications meant for their lives.

The problem was not the interface. The interface was the last line of defense against a process that was fundamentally overwhelming. No amount of UX polish was going to fix that. The fix had to be structural.

What we built: one essay that submitted to every partner college. One profile that auto-filled across all applications. One dashboard that showed exactly what was missing and when it was due. The reduction in cognitive load was not incremental. It was categorical. A student applying to 10 colleges went from 30+ hours of effort to 6. Completion rose to 47%, a figure we validated through structured A/B testing with a lead statistician. That number held up across multiple cohorts.
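The structural idea, one shared profile feeding every application and one view of what is still missing, can be sketched in a few lines. This is a hypothetical illustration, not the Cappex data model: the class names, field names, colleges, and dates are all invented for the example.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class CollegeRequirement:
    college: str
    deadline: date
    required_fields: set[str]


@dataclass
class StudentProfile:
    # One shared profile: every application reads from the same answers.
    answers: dict[str, str] = field(default_factory=dict)


def missing_items(profile, requirements):
    """List (deadline, college, missing fields), nearest deadline first."""
    rows = []
    for req in requirements:
        missing = sorted(f for f in req.required_fields if not profile.answers.get(f))
        if missing:
            rows.append((req.deadline, req.college, missing))
    return sorted(rows, key=lambda row: (row[0], row[1]))


# Hypothetical student who has entered a name and one essay, nothing else.
profile = StudentProfile(answers={"name": "Sample Student", "essay": "Draft text"})
requirements = [
    CollegeRequirement("College A", date(2025, 1, 15), {"name", "essay", "transcript"}),
    CollegeRequirement("College B", date(2025, 1, 1), {"name", "essay"}),
    CollegeRequirement("College C", date(2024, 12, 1), {"name", "recommendation", "transcript"}),
]
dashboard = missing_items(profile, requirements)
for deadline, college, missing in dashboard:
    print(deadline, college, missing)
```

The student enters each answer once. College B drops off the list entirely because the shared profile already satisfies it, and the remaining work is ordered by deadline instead of living in the student's head.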

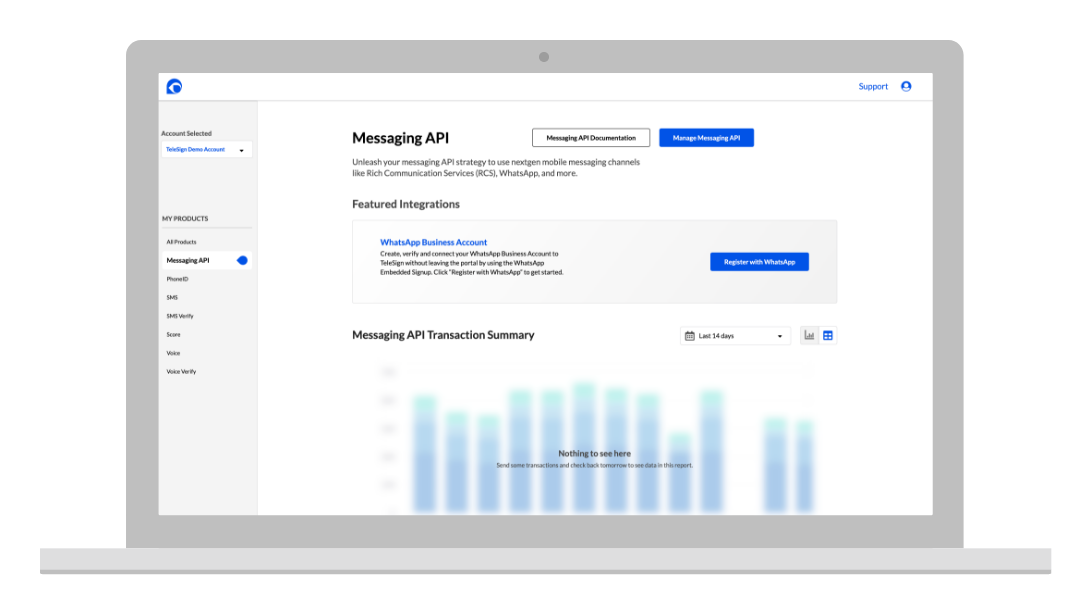

TeleSign: When Onboarding Is a Revenue Problem

The TeleSign version of this problem was the most complex and the most consequential in pure revenue terms.

When I arrived, enterprise onboarding averaged 67 days. Every day a customer was not live was a day TeleSign was not recognizing revenue from that account. The delay was not primarily a user experience failure. It was an operational failure. Customer success teams were manually handling every step: provisioning products, generating API keys, explaining documentation, managing country-specific regulatory requirements for 120+ countries with wildly different rules.

CS teams were spending 40% of their time on repetitive onboarding tasks. They were answering the same questions dozens of times per week. No single customer was asking an unreasonable question. The system just had no memory. Every new customer started from zero.

- 12 enterprise customers interviewed: consistent theme of not knowing what came next and feeling reluctant to bother the CS team with basic questions

- 1 week shadowing CS teams: same explanations delivered dozens of times, same manual provisioning steps repeated for every customer

- 40% of CS time consumed by tasks that could be automated

- 0 visibility for customers into their own onboarding status

The design solution was a self-service portal that moved provisioning, API key generation, phone number acquisition, billing, and documentation access entirely into the customer’s hands. Seven months. Distributed team. Pandemic constraints. 6am Pacific standups to overlap with European engineers.

The outcome: 67 days to 35 days. 85% of customers completing onboarding without any CS intervention. Daily transaction revenue growing from $500K to $2M+ over the same period. The CS team freed to focus on complex cases and customer success instead of first-week logistics.
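For readers who want those outcomes as relative changes, the arithmetic is short. The inputs below are the figures stated above; because the revenue figure is given as $2M+, the multiple is a lower bound.

```python
def percent_reduction(before, after):
    """Relative drop from before to after, as a percentage of before."""
    return (before - after) / before * 100


# Onboarding time: 67 days down to 35 days.
onboarding_reduction = percent_reduction(67, 35)

# Daily transaction revenue: $500K to at least $2M.
revenue_multiple = 2_000_000 / 500_000

print(f"Onboarding time reduced by {onboarding_reduction:.1f}%")
print(f"Daily transaction revenue grew at least {revenue_multiple:.0f}x")
```

That works out to roughly a 48% cut in time to go live and at least a fourfold increase in daily transaction revenue over the same period.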

The Pattern, Stated Plainly

Three different companies. Three different products. Three different user groups. The same root cause every time:

The product was built by people who already understood it, onboarded by processes designed for those same people, and then handed to users who had none of that context and no reliable way to get it.

At Netflix, the context gap was cultural and market-specific. The fix was measurement infrastructure.

At Cappex, the context gap was process complexity. Seventeen-year-olds were being asked to manage the operational complexity of applying to eight institutions simultaneously. The fix was removing work from their plate.

At TeleSign, the context gap was technical and regulatory. Fortune 500 engineering teams were being asked to navigate 120-country compliance requirements without a guide. The fix was encoding expert knowledge into the interface so the CS team did not have to deliver it manually for every customer.

In every case, the onboarding failure was downstream of an organizational assumption: that the user arrives with more context than they actually have. The design solution, in every case, was to close the gap between what the organization assumed the user knew and what the user actually knew.

What I Look For Now

When I pick up a new onboarding problem, I ask three questions before I touch a wireframe:

- Who built this product and for whom did they build it? The gap between those two answers is usually where the onboarding breaks.

- What does the user need to believe after the first session to come back for the second? Not what they need to know. What they need to believe. Confidence is a different design problem than comprehension.

- What work are we asking the user to do that the system could do for them? Every piece of friction in onboarding is a choice someone made. The choice is often invisible until you look for it explicitly.

The pattern will show up again in the next company I join. It always does. But recognizing it three times has made me faster at naming it and more credible when I explain to product and engineering why the first-session experience is not an afterthought. It is the moment the user decides whether to trust you.